A ‘deepfake’ is a photo or video that has been morphed using artificial intelligence and sophisticated media technology. The technique is largely used for pornography and for atrocious character assassinations of public figures.

More than 80% of our beliefs come from ‘seeing’, and ‘hearing’ comes next. The human mind is highly vulnerable to certain topics, and explicit sexual content such as pornography has a tremendous impact on our belief system. The most dangerous effect occurs while watching such content: the normal analytical and logical capabilities are skipped, and judgments form in our belief system in an extremely short time. After that, even though one part of the mind recognizes the content as fake, a large portion of the mind unconsciously starts believing that it is true. Changing these judgments is not easily possible with normal human effort; it requires tremendously powerful meditation practices.

Deepfake uses artificial intelligence to create morphed media files like photos or videos. The term is derived from “deep learning” and the English word “fake”. In most cases, other video editing technologies are used.

In early January 2018, the internet witnessed an explosion in what has become known as deepfakes: pornographic videos manipulated so that the original actress’s face is replaced with somebody else’s.

Actor Nicolas Cage’s face was morphed onto female bodies after various challenges were posted in online communities such as Reddit. Deepfake discussions and videos came under heavy scrutiny after this and were actively filtered and moderated on Reddit, Facebook, and other forums. Reddit edited its rules as follows:

As of February 7, 2018, we have made two updates to our site-wide policy regarding involuntary pornography and sexual or suggestive content involving minors. These policies were previously combined in a single rule; they will now be broken out into two distinct ones. Communities focused on this content and users who post such content will be banned from the site

Until just a few days ago, users of the very same online forums could be seen actively sharing the morphed video from 2010. Perhaps the internet community is slowly learning about the risks of deepfake pornography, especially to the lives of celebrities and public figures.

On 3 February 2018, the BBC published an article on the deepfake pornography problem, titled “Deepfakes porn has serious consequences.”

Deepfakes porn has serious consequences

In recent weeks there has been an explosion in what has become known as deepfakes: pornographic videos manipulated so that the original actress’s face is replaced with somebody else’s.

As these tools have become more powerful and easier to use, it has enabled the transfer of sexual fantasies from people’s imaginations to the internet. It flies past not only the boundaries of human decency, but also our sense of believing what we see and hear.

Beyond its use for hollow titillation, the sophistication of the technology could bring about serious consequences. The fake news crisis, as we know it today, may only just be the beginning.

Several videos have already been made involving President Trump’s face, and while they are obvious spoofs, it’s easy to imagine the effect being produced for propaganda purposes. One video created with the deepfake technology turns Donald Trump into Dr Evil from the Austin Powers films.

As is typical, institutions and companies have been caught unaware and unprepared. The websites where this kind of material has begun to proliferate are watching closely. But most are clueless about what to do, and nervous about the next steps.

Within communities experimenting with this technique, there is excitement as famous faces suddenly appear in an unlikely “sex tape”.

Only rarely do we see flickers of a heavy conscience as they discuss the true effects of what they are doing. Is creating a pornographic movie using someone’s face unethical? Does it really matter if it is not real? Is anyone being hurt?

Several Game of Thrones actresses, including Natalie Dormer, have had their features placed in pornographic clips

Perhaps they should ask: How does this make the victim feel?

As one user on Reddit put it, “this is turning into an episode of Black Mirror” – a reference to the dystopian science-fiction TV show.

How are deepfakes created?

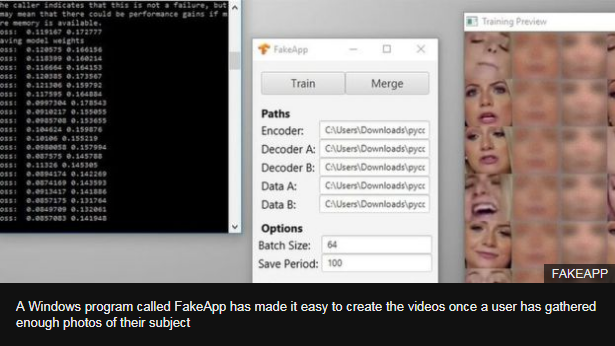

One piece of software commonly being used to create these videos has, according to its designer, been downloaded more than 100,000 times since being made public less than a month ago.

Doctoring sexually explicit images has been happening for over a century, but the process was often a painstaking one – considerably more so for altering video. Realistic edits required Hollywood-esque skills and budgets.

But by using machine learning, that editing task has been condensed into three user-friendly steps: Gather a photoset of a person, choose a pornographic video to manipulate, and then just wait. Your computer will do the rest, though it can take more than 40 hours for a short clip.

The most popular deepfakes feature celebrities, but the process works on anyone as long as you can get enough clear pictures of the person – not a particularly difficult task when people post so many selfies on social media.

The technique is drawing attention from all over the world. Recently there has been a spike in searches for “deepfake” coming from internet users in South Korea. Spurred, it can be assumed, by the publishing of several manipulated videos depicting 23-year-old K-Pop star Seolhyun.

“This feels like it should be illegal,” read a comment from one viewer. “Great work!”

Celebrities targeted

There are some celebrities in particular that seem to have attracted the most attention from deepfakers.

It seems, anecdotally, to be driven by the shock factor: the extent to which a real explicit video involving this subject would create a scandal.

Fakes depicting actress Emma Watson are among the most popular on deepfake communities, alongside those involving Natalie Portman.

But clips have also been made of Michelle Obama, Ivanka Trump and Kate Middleton. Kensington Palace declined to comment on the issue.

Gal Gadot, who played Wonder Woman, was one of the first deepfakes to demonstrate the possibilities of the technology.

An article by technology news site Motherboard predicted it would take a year or so before the technique became automated. It ended up taking just a month.

And as the practice draws more ire, some of the sites facilitating the sharing of such content are considering their options – and taking tentative action.

A Windows program called FakeApp has made it easy to create the videos once a user has gathered enough photos of their subject. Gfycat, an image hosting site, has removed posts it identified as being deepfakes – a task likely to become much more difficult in the not-too-distant future.

Reddit, the community website that has emerged as a central hub for sharing, is yet to take any direct action – but the BBC understands it is looking closely at what it could do.

A Google search for specific images can often suggest similar posts due to the way the search engine indexes discussions on Reddit.

Google has in the past altered its search results in order to make it more difficult to find certain types of material – but it is not clear if Google is considering this kind of step at this early stage. Like the rest of us, these companies are only just becoming aware this kind of material exists.

In recent years, these sites have wrestled with the problem of so-called “revenge porn”, real images posted without the subject’s consent as a way to embarrass or intimidate. Deepfakes add a new layer of complexity to what could be used to harass and shame people. A video may not be real – but the psychological damage most certainly would be.

Political abuse

It is a tech journalism cliche to say that one of the biggest drivers of innovation has historically been the porn business – whether it improved video compression, or was instrumental in the success of home video cassettes.

As was the case then, what has begun here with porn could reach into other facets of life.

In a piece for The Outline, journalist Jon Christian puts out a worst case scenario, that this technology “could down the road be used maliciously to hoax governments and populations, or cause international conflict”.

It is not a far-fetched threat. Fake news – whether satirical or malicious – is already shaping global debate and changing opinions, perhaps to the point of swaying elections.

Combining advancements in audio technology, from companies such as Adobe, could combine fakery for both eyes and ears – tricking even the most astute news watcher.

But for now, it is mostly porn. Those experimenting with this software do not skirt the issue.

“What we do here isn’t wholesome or honourable, it’s derogatory, vulgar, and blindsiding to the women that deepfakes works on,” wrote one user on Reddit, before concocting the laughable suggestion that deepfakes might actually diminish the impact of revenge porn.

“If anything can be real, nothing is real,” the user added.

“Even legitimate homemade sex movies used as revenge porn can be waved off as fakes as this system becomes more relevant.”

These kind of justification gymnastics are of course designed to protect the mental well-being of those who create this material, rather than those who are featured in it.

But the deepfake community is right about one thing: the technology is here, and there is no going back.

Former President Barack Obama’s deepfake video

Would former president Barack Obama really call Trump a “dipshit”? (I mean, in public?) Would he close an address with “Stay woke, bitches”? Probably not, and he certainly wouldn’t say “Killmonger was right.” But in the fake Obama video from Oscar-winning director and comedian Jordan Peele, the former president says all those things. It’s not just a funny bit (though it is pretty hilarious) but a warning about how easy it is becoming to make believable-looking yet fake videos.

The video at first looks like one of Obama’s normal addresses. (The fact that it appears to have been shot in the White House is your first clue, though we doubt Trump would let Obama back in, even to shoot a minute-long video.) But Obama says increasingly strange things, and soon the video turns split-screen, with Obama on the left, Peele on the right. Peele continues with his (superb) Obama impression, explaining that it’s all fake.